The customer is one of the global leading metals and mining corporations, with their major products including aluminium, diamonds, copper, gold, industrial minerals, etc. They were looking for skilled resources for their project to ingest 7 varied sources with different formats from 7 different vendors and consolidate and standardize the data in a single database. This would further help them in enhancing their marine safety dashboard.

The Need for Cloud-based Data Lake

The customer has been looking for a partner to assist with their project to build an AWS infrastructure as well as a custom code to ingest and cleanse these data sources and save them into a relational database. The DataLake on Cloud Infrastructure is meant to consolidate the data gathered from multiple unformatted sources in the form of complex Excel Spreadsheets and APIs (Structured and semi-structured)

This consolidated data must then be organized into a user-accessible database, thereby updating its Marine Safety Dashboard automatically. The customer’s Marine Safety data was previously emailed/collected by their Health and Safety Manager, Marine Technical Ops Manager, and Voyage Operations Manager. The data sets include CRM reports, FAI injuries & incidents, recordable injuries, open action & internal ship inspection tracker, inspections, ME incidents, restricted vessels, etc.

Each of these data sets was being sent in text, pdf, or excel format every month to the respective manager. The data was then collated by individual teams into individual ungoverned spreadsheets for conducting the required reporting & analysis. Top challenges to the development of the infrastructure included:

- Restrictions in the deployment environment (i.e. interfacing with vendor API’s or portals)

- Lack of negotiation power to influence vendors to provide data in the desired format at the desired frequency

- Requirements to change internal data collection/entry process

- Lack of pooled resources to build the product

The Solution

The customer engaged with Blazeclan, a Premier Consulting Partner of AWS, to help them with their requirements and evade the challenges in building a consolidated data infrastructure. This is intended to offer the customer a solution to manual consolidation and governance of data, data visibility across teams, and automated reporting. Also, the solution will increase the frequency of updates, enabling Analysts and Managers of the customer to remain focused on data analysis and implementation of preventative safety measures.

The Approach to the Solution Involved

Setting-Up Domain Address: This is to receive the files for processing and is carried out by the customer. The customer registered a new domain in the AWS SES section and configured an email address to receive the files.

Capturing the attachment from the email: The email address registered in SES is configured to store the emails into a particular folder in an S3 bucket. Whenever a new email arrives, the push to the S3 bucket invokes a Lambda function that is responsible for extracting the attachment that exists in the email. Every Attachment is then verified and placed into an individual source folder, according to the naming convention provided by the customer. This stage is called the Raw stage.

Cleansing the source files: Every push action in the raw stage invokes individual source lambda functions that are responsible for converting the data set and files in a common format which is easier for processing. This also includes the normalisation of the data such as common date formats for all the sources. This stage is called the Cleansing stage.

Ingestion of the cleansed files: In this process, the files from the cleansing stage are converted or modified according to the agreed data model essential for providing the holistic picture of the dataset from all the sources. This includes identifying the key columns which are required to join with other sources. It is in this stage which is called the Ingestion stage, the data is pushed into a database or data warehouse.

The implemented solution will allow the customer to send all the required email attachments to a single email address. This triggers a series of automated processing pipelines to process the files according to the data model and push it into the data warehouse. The data is consumed in a form of report which highlights the port and vessel incident statistics.

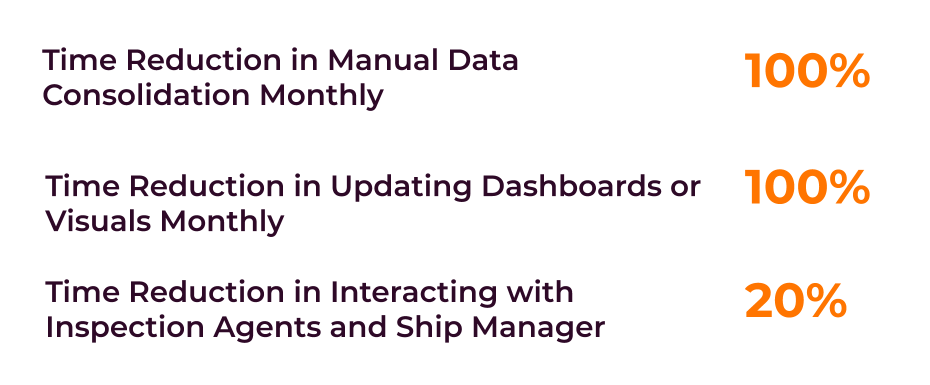

Benefits Achieved by the Customer

- High Scalability: The data lake solution provided the customer with the ability to scale their infrastructure according to the changing business demands. The solution further enabled a new auto-scaling feature with minimum or zero downtime.

- Automation: Automated data collection, storage and management enabled the customer’s application teams to scale their deployment speed. Automation and data ingestion enables the customer to have a consistently updated view of business performance and customer behaviour.

- Analytics-Driven Business decisions: Consolidation of all data sources in a single platform enabled the customer in making efficient decisions.

Tech Stack

| Amazon Lambda | AWS SES | Amazon EC2 |

| Amazon RDS | Amazon SNS | Amazon S3 |

| PostgresSQL | ||